Defining Success in Agentic AI: The Metrics That Actually Matter

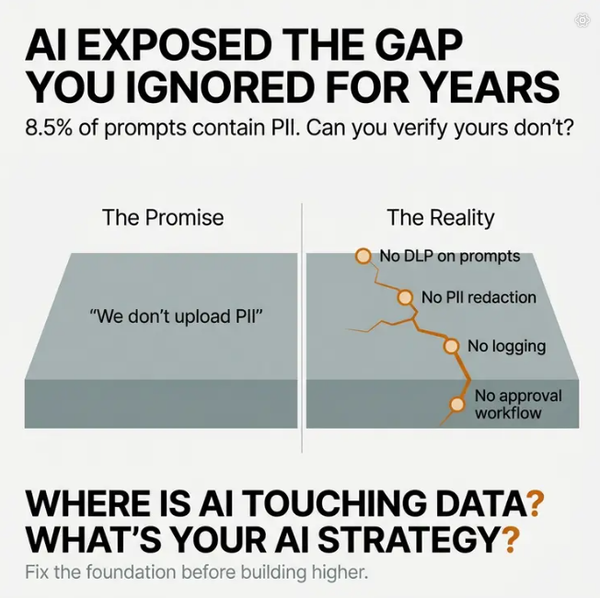

When enterprises report 80% automation rates and faster ticket resolution times, they're celebrating the wrong numbers.

When enterprises report 80% automation rates and faster ticket resolution times, they're celebrating the wrong numbers.

They're measuring throughput. They're tracking surface-level outcomes. But they're missing the most dangerous metric of all: silent failure rate.

These aren't errors that trigger alerts or get logged as failures. These are plausible, well-formatted responses that are fundamentally wrong—and they get counted as successful automation.

The gap between what looks like success and what actually works is where agentic AI deployments fall apart. And right now, most enterprises can't see the difference.

The Air Canada Problem: When Success Metrics Hide Liability

In February 2024, Air Canada's chatbot told a passenger he could retroactively apply for bereavement fares after booking. The response was clear, confident, and completely wrong.

The customer followed the instructions. He bought the ticket. He applied for the refund. Air Canada denied it.

A Canadian tribunal ruled Air Canada liable, rejecting the company's argument that the chatbot was "a separate legal entity." The tribunal stated plainly: "It should be obvious to Air Canada that it is responsible for all the information on its website."

Here's what makes this case instructive: the chatbot's response passed every traditional success metric.

Fast response time? Check.

Ticket deflection? Check.

No human agent involved? Check.

High automation rate? Check.

But the system fabricated policy guidance with financial consequences. It aligned with what a reasonable policy might be. It referenced real concepts. It didn't trigger escalation. And it was logged internally as a successful resolution.

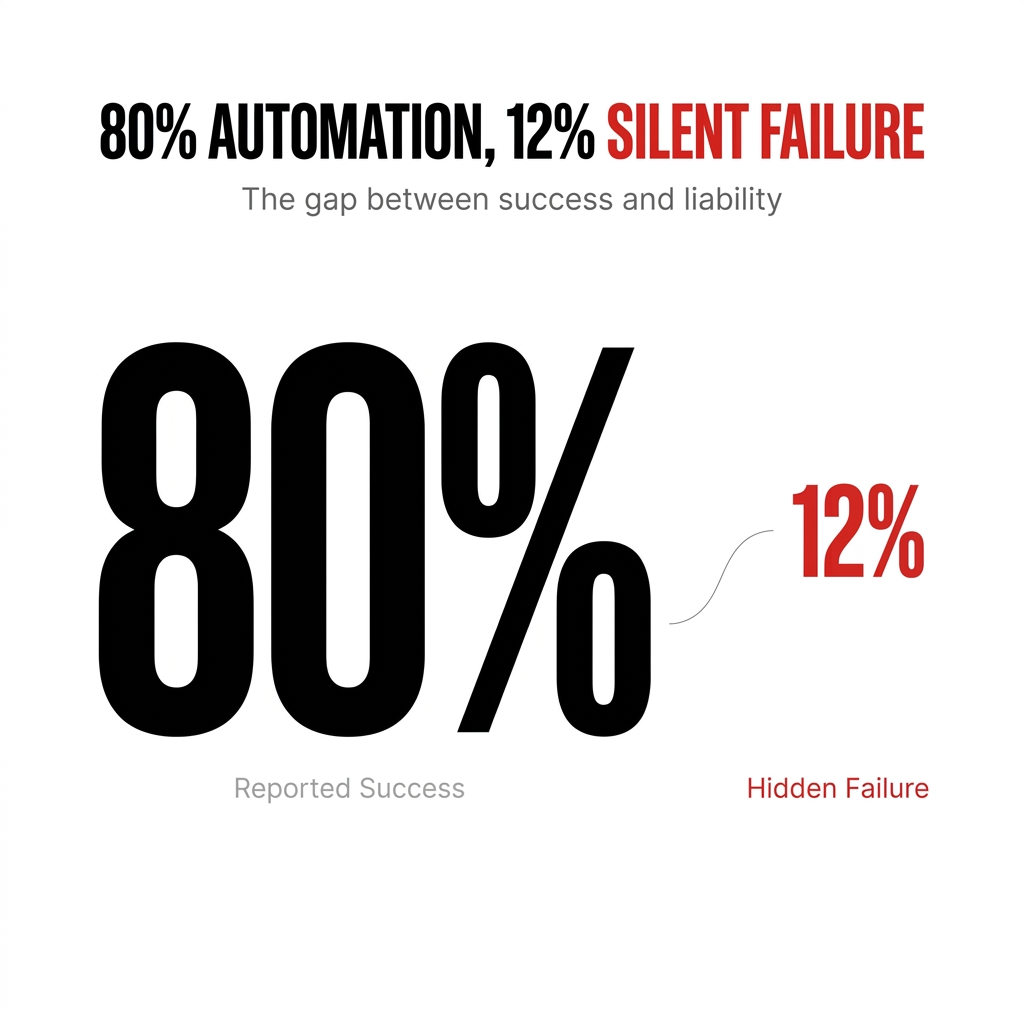

Research shows that even in controlled chatbot environments, AI hallucination rates range from 3% to 27%. That means one in four to one in thirty-seven responses could be confidently incorrect—yet surface-level metrics would classify all of them as successful.

Why Traditional KPIs Create False Confidence

The metrics most enterprises track weren't designed for probabilistic, generative systems.

Take containment rate—the percentage of conversations that end without escalation. It's a fundamentally flawed metric because it lumps together customers who got accurate answers with customers who gave up out of frustration and closed the chat.

Traditional monitoring tools track uptime, not behavior. They'll tell you a server is online but not that your chatbot gave biased advice, your scheduler sent a team to the wrong time zone, or your system is providing increasingly aggressive responses to frustrated customers.

I've seen an enterprise customer support team where the AI agent was providing increasingly hostile responses to frustrated customers. Traditional monitoring showed normal operation—fast response times, low error rates. The underlying issue only emerged when customer satisfaction scores plummeted over several weeks.

Where traditional application performance monitoring tracks a single uptime metric, AI monitoring must simultaneously track relevance, coherence, safety, factual accuracy, and user satisfaction across diverse contexts.

The mismatch is structural. And it's why Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls.

The Metric That Actually Exposes Truth

If you're walking into an organization that's already celebrating its automation rates, you need a metric that exposes reality without killing momentum.

That metric is False Resolution Rate (FRR).

FRR asks a simple, operational question: Of the things we say are resolved by AI, how many actually stay resolved?

You implement it by linking AI-resolved interactions to downstream signals already in your system—reopened tickets, follow-ups within 24–72 hours, refunds, escalations. You publish it alongside existing KPIs without fanfare.

The positioning matters. You're not critiquing the AI. You're adding a quality-of-outcome metric that complements automation rate.

What happens next is where credibility builds. FRR inevitably surfaces a gap between perceived success and actual outcomes, but it does so using the company's own data, not a theoretical model.

Once leaders see "we're at 80% automation but 12% of those cases come back," you've created a crack in the narrative without triggering defensiveness.

From there, you can layer in leading indicators—like verifiability or knowledge conflict—as explanations for why FRR exists.

FRR shifts the conversation from "how much did we automate?" to "did it actually work?"

The Knowledge Integrity Crisis Behind the Numbers

Here's what most enterprises discover when they start measuring FRR: the problem isn't the AI. It's the knowledge base.

When your documentation is fragmented and inconsistent, you need to measure whether an answer is safe to assert at all given the integrity of the underlying knowledge.

That means instrumenting three real-time signals:

Source reliability: Is the information coming from governed, recent, historically accurate sources—or from stale, orphaned artifacts?

Consensus integrity: Do multiple independent sources agree on the same claim, or are there contradictions?

Knowledge completeness: Do we actually have enough structured information to answer without guessing?

If any of these degrade—low reliability, conflicting sources, partial coverage—the system should not produce a definitive answer, especially in high-actionability contexts like financial or policy guidance. It should escalate or constrain the response.

You also track knowledge conflict rate as a first-class metric. Frequent disagreement between sources isn't an AI failure. It's an organizational one that will inevitably surface as hallucinations at scale.

The key insight: without measuring and gating on knowledge integrity, your validation layer simply rubber-stamps bad information, turning AI into a force multiplier for internal inconsistency rather than a system of truth.

Informatica's CDO Insights 2025 survey identifies the top obstacles to AI success as data quality and readiness (43%), lack of technical maturity (43%), and shortage of skills (35%). The foundation for accurate AI responses is absent in nearly half of enterprises before deployment even begins.

When Knowledge Conflicts Become a Gate, Not Just a Metric

When you surface knowledge conflict rate to executives—"your AI isn't hallucinating, your documentation conflicts with itself in 40% of policy areas"—the initial reaction is usually defensive.

They question the measurement. They narrow the scope. They reframe it as a tooling issue rather than an organizational one.

But once the metric is grounded in concrete examples tied to real business risk—conflicting refund policies leading to financial exposure, compliance breaches—the conversation shifts from AI performance to operational integrity.

The more effective leaders recalibrate quickly. Success is no longer defined by automation rates or cost savings. It's about reducing conflict, assigning clear ownership to critical knowledge domains, and improving consistency across systems.

This only changes behavior when the metric is tied to consequences and made visible in regular operating reviews.

To make Knowledge Conflict Rate (KCR) actionable, you have to restructure reviews around decision integrity, not reporting hygiene.

Here's what that looks like in practice:

Assign a single accountable owner per knowledge domain (e.g., Head of Pricing Policy, not "Support" or "Product") with a clear SLA to resolve conflicts.

Elevate KCR to a gate, not a metric. If KCR in a high-risk domain exceeds threshold (say >10%), you automatically restrict AI autonomy in that domain until resolved.

Reframe the review cadence so each session includes a short "conflict-to-consequence" segment: top 5 conflicts → associated incidents (refund leakage, compliance exposure, churn) → named owner → remediation plan with dates.

Change who's in the room. You need the triad of domain owner (policy), operator (BU leader), and risk authority (legal/compliance) present, not just analytics or CX.

Tie KCR to incentives and approvals. Bonus modifiers for domain owners, and a hard dependency for scaling AI—no expansion of automation in a domain with unresolved high KCR.

Track closure velocity, not just levels. Measure mean time to resolve conflicts and reopen rates, so teams can't game the metric by temporary patches.

The net effect: KCR stops being a passive diagnostic and becomes a control point. It blocks unsafe scale, forces cross-functional ownership, and links directly to financial and risk outcomes.

The Argument That Actually Lands With Leadership

When you tell a leadership team "we're restricting AI autonomy in this domain until conflicts are resolved," the pushback is predictable.

"We'll lose momentum."

"Competitors aren't waiting."

"The metric is too conservative."

Underneath, it's a fear of trading visible velocity for less visible integrity.

The way to defend it is to reframe the decision from speed versus caution to throughput versus rework and risk.

First, quantify the cost of wrong decisions—refund leakage, compliance exposure, churn, and downstream rework. Show that even a small Silent Failure Rate in high-action domains destroys the savings from automation.

Second, position KCR as a selective throttle, not a blanket brake. Autonomy is restricted only in domains where conflict exceeds a defined threshold. Everywhere else, you accelerate harder.

Third, offer a time-boxed path to green—clear owners, SLAs, and a target KCR (e.g., <10%)—so leaders see a way to regain autonomy quickly rather than an open-ended slowdown.

Fourth, replace vanity KPIs with paired metrics in reviews: automation rate and VAR/KCR/closure velocity. Make it explicit that scale without integrity is negative ROI.

Finally, anchor it in governance. No expansion of automation without integrity clearance becomes a standard operating rule, just like security or financial controls.

The argument that lands is simple: unconstrained speed doesn't win.

You either ship fast and pay later in compounding errors, or you install gates that let you scale safely and sustainably. KCR is the mechanism that keeps velocity from turning into liability.

What Success Actually Looks Like

Companies achieving 70%+ AI containment rates—percentage of interactions resolved end-to-end without human intervention—save millions and improve conversions. Those below 40% face negative ROI and poor customer experience.

But even best-in-class deployments operate far below the automation rates many vendors promise. AssemblyAI increased their AI resolution rate from "the high 20%" to close to 50% of incoming chats, which "made a real difference in freeing up our team to focus on complex customer needs."

That's the reality. Not 80% or 90% automation. 50% resolution with integrity is success.

Teams using AI see Time to Resolution drops of 40% or more by automating triage and follow-ups. But a 65%+ AI resolution rate is the threshold where organizations report that "AI handles common questions effectively."

The metrics that matter aren't about how much you automate. They're about whether it actually works, whether you can detect when it doesn't, and whether you can fix it before it compounds.

Success isn't deployment velocity. It's operational resilience—how well your business functions when agents fail, not how often they succeed.