Why Grocery Retail Keeps Repeating the Same Expensive Mistakes

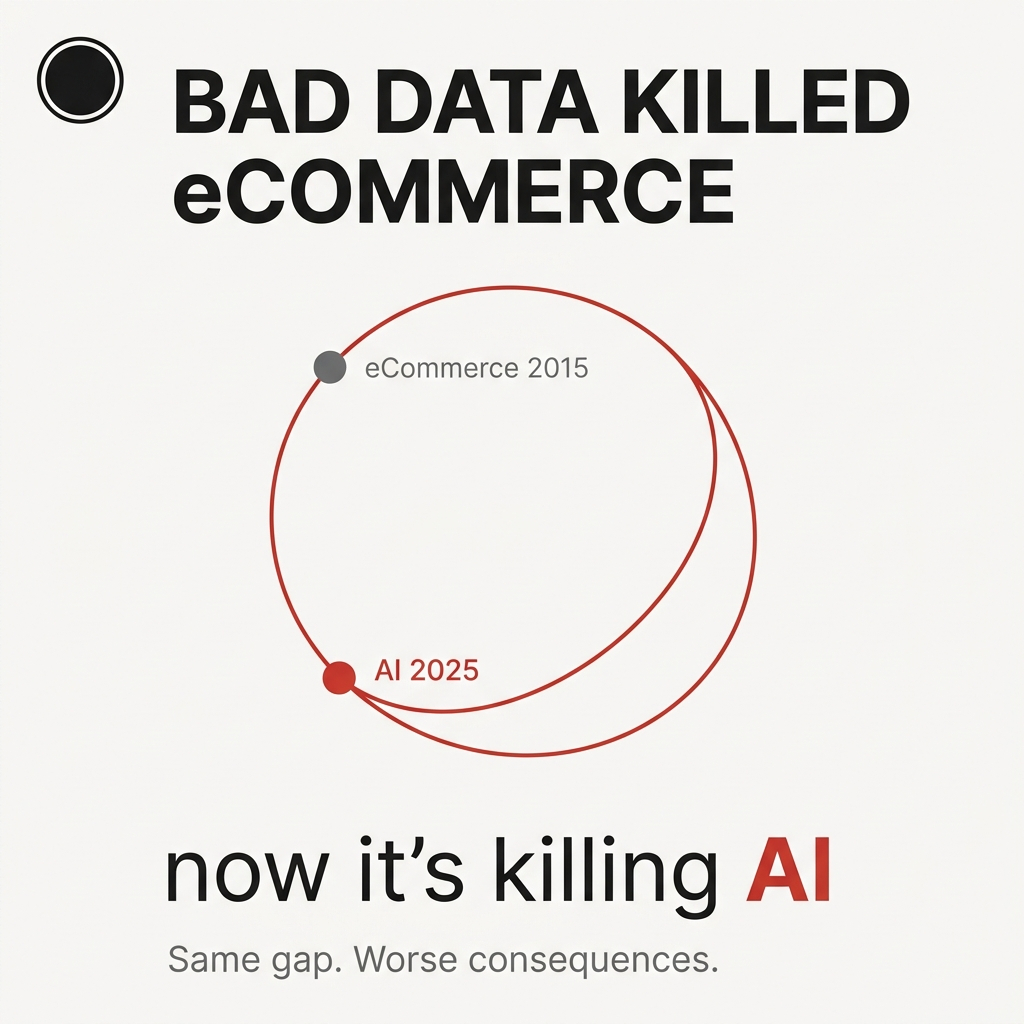

I've watched grocery retailers make the same mistake twice now.

First with eCommerce. Now with AI.

The pattern? Identical. The organizational dysfunction? Exactly the same. And the cost? Compounding with every month of delay.

When grocery retail shifted to eCommerce, the problems were obvious from day one: incomplete product attributes, missing images, broken taxonomy, pricing errors on variable-weight items. Suppliers sent inconsistent data with no enforced standards. Inventory systems didn't match what customers saw online.

Underneath all of this was the real issue. No one owned product data end-to-end.

Merchandising owned vendor relationships. IT owned the systems. eCommerce owned the customer experience. Suppliers provided the raw data.

No single person was accountable for data quality across the entire lifecycle.

So problems bounced around like a hot potato. eCommerce flagged bad images. IT pointed to upstream systems. Merchandising deferred to vendors. Vendors sent inconsistent updates with no enforcement.

Errors persisted indefinitely because fixing them was always "someone else's problem."

Sound familiar?

The Economic Pressure That Finally Forced Action

What finally forced retailers to address this wasn't insight.

It was pain.

As eCommerce moved from a side channel to a material revenue driver (accelerated by COVID), poor product data stopped being an inconvenience and started hitting the P&L directly.

Lost conversions. Substitution failures. Higher refund rates. Degraded customer trust.

The numbers became impossible to ignore:

- Analysts estimate poor data quality impacts 15-25% of retail revenue

- Gartner estimates that poor data quality costs organizations an average of $12.9 million a year

For grocery retailers managing thousands of SKUs across multiple channels, these weren't abstract numbers. These were the direct cost of organizational dysfunction showing up in quarterly earnings calls.

Fixing this took years, not months.

Why? The problem wasn't technical. The problem was organizational.

Retailers had to:

- Standardize data models across legacy systems

- Enforce supplier compliance

- Implement PIM/MDM platforms

- Establish clear ownership and governance

This required cross-functional alignment, process redesign, and cultural change.

Third parties became critical. They brought the tooling, normalization frameworks, and scale that internal teams didn't have.

The AI Déjà Vu

Now I'm watching the exact same pattern unfold with AI.

Frame by frame. Scene by scene.

Retailers are rushing to deploy AI copilots, search tools, and personalization layers. All of this sits on top of the same fragmented, inconsistent product data from eCommerce.

Same foundation. Different technology layer.

You're seeing early wins in low-risk use cases like chatbots and basic recommendations. This creates the illusion of progress.

Underneath? Nothing fundamental has changed.

No clear data ownership. No enforced standards. No unified view of product content.

The investment focus is skewed in a familiar way:

- Budgets go into tools, models, and infrastructure

- Not into fixing the data layer

- Teams still operate in silos

- Suppliers still send inconsistent data

- Governance remains an afterthought

The difference now? AI amplifies these weaknesses instead of masking them.

Bad data doesn't just create friction anymore. Bad data creates confidently wrong outputs at scale.

A 2025 MIT study found that 95% of enterprise generative AI pilots delivered no measurable P&L impact.

The core issue wasn't talent, infrastructure, or regulation. The core issue was the lack of integration and contextual adaptation rooted in poor data quality.

In other words: garbage in, confident garbage out.

When AI Turns Bad Data Into Dangerous Decisions

Here's what this looks like when the stakes get real.

Picture a retailer launching an AI-powered meal planner. A customer asks for "peanut-free lunches for my kids."

The system pulls from product data that looks complete on the surface. But allergen tags are inconsistent, ingredient lists are unstructured, and supplier naming varies between "peanuts" and "groundnuts."

The AI confidently returns a curated list labeled "safe." The list includes items with hidden cross-contamination warnings or missing allergen flags.

Nothing breaks. No error is triggered.

But the outcome is dangerous.

A parent trusts the recommendation. A child has a reaction. The retailer now faces legal exposure, brand damage, and regulatory scrutiny.

The AI didn't hallucinate. The AI synthesized bad data and turned it into a high-risk, customer-facing decision.

This is the new risk profile.

Missing or incorrect attributes lead to regulatory failures, fines, or product recalls. Even minor errors damage brand reputation and erode customer trust.
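To make the failure mode concrete, here is a minimal Python sketch of a fail-closed allergen filter. The synonym table, the field names (`allergens`, `may_contain`), and the sample products are all hypothetical, not any retailer's actual schema. The idea it illustrates: a product with missing allergen data is treated as unsafe instead of silently passing.

```python
# Hypothetical synonym table: maps supplier wording to one canonical allergen.
ALLERGEN_SYNONYMS = {
    "peanut": "peanut",
    "peanuts": "peanut",
    "groundnut": "peanut",
    "groundnuts": "peanut",
}

def canonical_allergens(raw_values):
    """Normalize free-text allergen labels to canonical names."""
    result = set()
    for value in raw_values:
        key = value.strip().lower()
        result.add(ALLERGEN_SYNONYMS.get(key, key))
    return result

def is_free_of(product, allergen):
    """Return True only when the data positively supports the claim.

    Missing allergen fields fail closed: the product is excluded.
    """
    declared = product.get("allergens")
    may_contain = product.get("may_contain")
    if declared is None or may_contain is None:
        return False  # unknown is not the same as safe
    flagged = canonical_allergens(declared) | canonical_allergens(may_contain)
    return allergen not in flagged

# Illustrative sample data (assumed, not real products).
products = [
    {"name": "Oat bar", "allergens": ["oats"], "may_contain": []},
    {"name": "Satay sauce", "allergens": ["Groundnuts"], "may_contain": []},
    {"name": "Granola", "allergens": ["oats"], "may_contain": ["peanuts"]},
    {"name": "Rice cakes"},  # supplier sent no allergen data at all
]

peanut_free = [p["name"] for p in products if is_free_of(p, "peanut")]
print(peanut_free)
```

In this sketch only the oat bar survives. The satay sauce is caught by the synonym mapping, the granola by its cross-contamination field, and the rice cakes are excluded because nobody supplied the data.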

Why Retailers Don't Internalize the Risk

When I talk to retailers about this, most don't fully grasp the risk.

At least not initially.

The typical response falls into two camps:

Camp 1: Acknowledgment without urgency.

They understand data quality matters, but treat it as a "phase two" problem. The focus remains on launching pilots, proving use cases, keeping pace with competitors. There's an implicit belief that issues will get cleaned up later.

Camp 2: Optimistic deferral.

"The models will get better." "We'll fine-tune this." "We'll put guardrails in place."

They assume the technology layer will compensate for weaknesses in the data layer.

What's missing? A shift in accountability.

Very few organizations stop and say: "We shouldn't be deploying customer-facing AI until we trust the underlying data."

This is the déjà vu. Move fast, patch later.

With AI, the consequences are amplified. The system doesn't expose bad data... the system acts on bad data with confidence.

And by the time you realize the foundation is broken, the damage is done.

What Separated Winners From Laggards in eCommerce

Some retailers did get their data house in order during eCommerce.

What separated them wasn't better technology... it was clear ownership and enforcement at the operating model level.

They did three things differently:

1. They established end-to-end accountability for product data.

Not shared responsibility. Single-threaded ownership. Someone was explicitly accountable for data quality, completeness, and standards across the entire lifecycle. From supplier ingestion to customer-facing channels.

2. They treated product data as a governed asset, not a byproduct of merchandising.

This meant defining canonical data models, enforcing attribute standards, and rejecting or quarantining supplier data when the data didn't meet requirements. Suppliers didn't dictate structure anymore. The retailer did.

3. They built operational mechanisms to enforce quality at scale.

PIM/MDM platforms, validation rules, data stewardship workflows, and KPIs tied to data completeness and accuracy. This wasn't a one-time cleanup. This became part of day-to-day operations with measurable accountability.
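A KPI tied to data completeness can be as simple as the share of records per supplier that carry every required attribute. The sketch below is one hypothetical way to compute it in Python; the required field list and record layout are assumptions for illustration only.

```python
from collections import defaultdict

# Assumed minimum attribute set; a real list would come from the data owner.
REQUIRED = ("allergens", "ingredients", "unit_of_measure", "image_url", "price")

def is_complete(record):
    """A record is complete when every required field is present and non-blank."""
    return all(
        record.get(field) is not None and record.get(field) != ""
        for field in REQUIRED
    )

def completeness_by_supplier(records):
    """Return {supplier: fraction of that supplier's records that are complete}."""
    totals = defaultdict(int)
    complete = defaultdict(int)
    for record in records:
        totals[record["supplier"]] += 1
        if is_complete(record):
            complete[record["supplier"]] += 1
    return {supplier: complete[supplier] / totals[supplier] for supplier in totals}

# Illustrative sample data (assumed).
full = {"allergens": ["oats"], "ingredients": "oats, honey",
        "unit_of_measure": "each", "image_url": "oat-bar.png", "price": 1.99}
records = [
    {"supplier": "A", **full},
    {"supplier": "A", **full, "image_url": ""},  # blank image counts as missing
    {"supplier": "B", **full},
]

scores = completeness_by_supplier(records)
print(scores)
```

Tracked over time and reported per supplier or category, a number like this is what gives the accountable owner something to enforce against.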

The difference wasn't intent... it was discipline.

And discipline is exactly what's missing in AI deployments today.

Why AI Allows Retailers to Believe They Don't Have to Learn

So here's the question: If discipline and centralized ownership were the answer for eCommerce, why aren't retailers applying the same playbook to AI readiness?

Because AI is being framed (and funded) as a technology initiative, not an operating model problem.

In eCommerce, the pain was immediate and visible. Broken search. Poor conversion. Customer complaints.

This forced alignment. The impact showed up quickly in revenue and customer experience.

With AI, the signals are more ambiguous.

Early demos look impressive. Pilots generate enough value to justify momentum. Leadership teams convince themselves they're making progress. Meanwhile, underlying data issues remain unresolved.

There's no forcing function.

Yet.

AI sits across IT, data, digital, and business units. Everyone is involved. No one owns the full stack from data quality to model output to customer risk.

There's a persistent belief that better models, fine-tuning, or guardrails will smooth over inconsistencies.

Spoiler: they won't.

No executive wants to be seen as "behind on AI." Organizations prioritize launching over readiness. They assume governance gets retrofitted later.

The cost of bad data in AI is often indirect at first. Subtle inaccuracies. Degraded trust. Hidden risk. This doesn't hit the P&L as cleanly as a drop in conversion rate. So it's easier to ignore.

AI allows retailers to believe they don't have to apply the eCommerce lesson... at least in the early stages.

That's the trap.

The Window Is Closing Fast

For retailers who are behind right now, it's not too late.

But the window is narrower than most think.

What matters isn't adoption speed.

What matters is foundation readiness.

Retailers behind on AI tools? They catch up relatively fast.

What's much harder to catch up on: data discipline, governance, and operating model maturity. These are multi-year capabilities, not plug-and-play solutions.

Right now, we're in a transitional phase:

- Leaders like Walmart are pulling ahead quietly through foundational strength

- The majority are still experimenting

- This creates a temporary perception of parity

- The true gap hasn't fully materialized in the P&L

This creates a short but real opportunity.

Retailers who act now (by establishing ownership, enforcing data standards, and treating AI as a data problem) still have time to close the gap.

But you have to shift immediately from pilots to foundations. This is counter to how most organizations currently operate.

Because once AI moves from experimentation to core operational workflows (pricing, assortment, personalization, supply chain optimization), the advantage compounds.

Better data leads to better models. Better decisions. More data. Stronger feedback loops.

At that point, it becomes self-reinforcing. And the window closes.

If retailers spend the next 12-24 months optimizing pilots instead of fixing their data foundations, they won't just fall behind.

They'll be structurally locked out of competing at the same level.

The window is closing.

What to Do Tomorrow

If you realize you're behind, here's the first thing to do tomorrow.

Not in six months. Tomorrow.

Assign a single, accountable owner for product data quality. Give them authority to enforce standards across the organization.

Not a committee. Not shared responsibility. One person.

Because until someone owns it end-to-end, nothing else sticks.

This owner's immediate mandate isn't to fix all the data. The mandate is to establish control:

- Define what "good" looks like (a minimum viable set of required attributes: allergens, ingredients, unit of measure, images, pricing integrity)

- Identify where the biggest data gaps and risks are

- Start with high-impact categories like fresh, private label, and top-selling SKUs

Put in place hard gates:

- If supplier data doesn't meet standards, the data doesn't go live

- Or the data gets flagged and quarantined
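A hard gate of this kind can be sketched in a few lines of Python. Everything here is an assumption for illustration: the field names, the allowed units, and the rule that a price must be a positive number. The shape is what matters: each record either goes live or lands in quarantine with explicit reasons.

```python
# Assumed set of accepted units; a real list would be defined by the data owner.
ALLOWED_UNITS = {"each", "kg", "g", "l", "ml"}

def validate(record):
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    for field in ("allergens", "ingredients", "image_url"):
        if record.get(field) is None or record.get(field) == "":
            problems.append(f"missing {field}")
    if record.get("unit_of_measure") not in ALLOWED_UNITS:
        problems.append("invalid unit_of_measure")
    price = record.get("price")
    if not isinstance(price, (int, float)) or price <= 0:
        problems.append("invalid price")
    return problems

def gate(records):
    """Split records into SKUs cleared to go live and quarantined SKUs with reasons."""
    live, quarantined = [], []
    for record in records:
        problems = validate(record)
        if problems:
            quarantined.append((record["sku"], problems))
        else:
            live.append(record["sku"])
    return live, quarantined

# Illustrative sample data (assumed).
incoming = [
    {"sku": "1001", "allergens": ["milk"], "ingredients": "milk",
     "image_url": "milk.png", "unit_of_measure": "l", "price": 1.49},
    {"sku": "1002", "allergens": None, "ingredients": "beef",
     "image_url": "beef.png", "unit_of_measure": "lb", "price": 0},
]

live, quarantined = gate(incoming)
print(live)
print(quarantined)
```

The quarantine list doubles as a worklist: each entry says exactly what the supplier has to fix before the item can be published.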

At the same time, pause any expansion of customer-facing AI use cases until those minimum standards are enforced.

Don't stop AI entirely. Stop pretending the foundation is ready to scale.

This isn't a technology move. It's a control point.

The moment someone is accountable and empowered to say "no" to bad data, everything changes.

You shift from "we'll clean this up later" to "this is now part of how we operate."

Everything else comes after this. PIM systems. Governance frameworks. AI readiness.

Without the first step, nothing changes.

The Uncomfortable Truth

If you haven't started, you've already lost ground.

But lost ground can be recovered.

The retailers who will win with AI aren't the ones moving fastest on tools.

They're the ones who applied the eCommerce lesson early: data first, ownership clear, governance enforced. Before scaling anything customer-facing.

For those who've already lost too much time, the path forward is clear:

Pick the right third party to help you catch up.

Here's what separates a real solution from an expensive band-aid:

Does this partner enforce a canonical data model and governance... or do they just help you clean and move data faster?

Most third parties promise data enrichment, normalization, AI tagging, faster onboarding of supplier content.

This is the band-aid.

A real solution does something much harder.

A real solution:

- Defines a canonical schema (what "good" looks like, unambiguously)

- Maps all incoming data to the standard (doesn't just pass data through)

- Enforces validation rules at ingestion (bad data is rejected or quarantined)

- Creates feedback loops with suppliers so quality improves upstream, not downstream

- Provides ongoing governance workflows, not a one-time cleanup project
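As a rough illustration of the mapping and feedback-loop points above, the Python sketch below maps two suppliers' field names onto one canonical schema and counts which canonical fields each supplier keeps leaving out. The supplier names, field mappings, and three-field schema are hypothetical.

```python
from collections import Counter

# Hypothetical per-supplier field mappings onto one canonical schema.
FIELD_MAPS = {
    "acme": {"item_no": "sku", "desc": "name", "allergy_info": "allergens"},
    "freshco": {"SKU": "sku", "title": "name", "contains": "allergens"},
}
CANONICAL_FIELDS = ("sku", "name", "allergens")

def to_canonical(supplier, raw):
    """Rename known supplier fields; unmapped fields are dropped, not passed through."""
    mapping = FIELD_MAPS[supplier]
    record = {mapping[key]: value for key, value in raw.items() if key in mapping}
    missing = [field for field in CANONICAL_FIELDS if field not in record]
    return record, missing

def supplier_feedback(feed):
    """Count missing canonical fields per supplier for an upstream report."""
    report = {}
    for supplier, raw in feed:
        _, missing = to_canonical(supplier, raw)
        report.setdefault(supplier, Counter()).update(missing)
    return report

# Illustrative sample feed (assumed).
feed = [
    ("acme", {"item_no": "A1", "desc": "Oat bar", "allergy_info": ["oats"]}),
    ("freshco", {"SKU": "F1", "title": "Granola"}),
    ("freshco", {"SKU": "F2", "title": "Satay sauce", "extra": "x"}),
]

report = supplier_feedback(feed)
print(report)
```

A report like this turns "your data is bad" into a specific, countable request to each supplier, which is what moves quality upstream.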

If the partner only makes your data look better, you've bought a service.

If they make your data behave consistently and predictably, you've built a capability.

That's the dividing line.

The goal isn't to clean your data once.

The goal is to ensure every new piece of data entering your system meets a standard you control.

Anything less only delays the problem.

And makes your AI more confidently wrong.